Contrary to initial speculation, Google’s latest update, the Medic Update, has ramifications that go way beyond the health industry and establishing author expertise.

A year from now we may look back at August 2018 as a pivotal shift in how SEO works, on par with the introduction of Google Panda and Penguin (if less cutely named).

As more hard data emerges, we’re starting to see a clearer picture of which factors played a role in rankings changes, and how the big Medic Update was about much more than just Expertise, Authority, and Trust.

A lot of great websites lost rankings after the Medic update. If you’re among those affected and trying to understand why, we hope this list of influential factors will help you focus your search. If you need further help, let me know! Recovery from the Google Medic Update is possible.

Note: This is an edited version of research we put together for CanIRank Full Service clients. Part 1 explained What is a broad core algorithm update?; this is part 2, What changed in the Google Medic Update?; and part 3 (coming soon!) explains How to Recover from the Google Medic Algorithm Update.

As a team that combines marketers with data scientists and engineers, we believe a key part of an SEO consultant’s job is to help clients understand how Google’s high-level objectives like “make great content” or “become the leading authority in your field” translate into actual machine-readable signals, and how you can improve your content marketing processes to strengthen those signals while still balancing other business objectives.

This 3-part series on Google’s latest core algorithm update (“Medic Update”) is our attempt to help elevate the conversation around algorithm updates to more of an evidence-based exploration focused on specific algorithmic signals rather than intangible high-level objectives.

Now that we have an idea of what Google was trying to accomplish with the Medic Update, and how they might have gone about making those changes, we can dive into better understanding how the update changed the importance of various ranking factors.

To identify which algorithmic signals are correlated with gains and losses in Google’s big Medic update, we identified approximately 100 sites that had seen significant changes in one direction or the other. Most of the examples are medium-large sites due to data availability, but we found impacted sites of all sizes, business models, and industries, including health, financial services, travel, SaaS, coupons, auto, and ecommerce.

Using CanIRank’s SEO Competitor Analysis tool, we analyzed keywords with large fluctuations to see how gaining URLs differed from losing URLs. Based on that preliminary analysis and initial reports in the SEO blogosphere, we came up with a list of 27 potential culprits to analyze further, such as the presence of contact information, quality of an author’s credentials, and link velocity.

The preliminary statistical findings are based on complete data for about a dozen URLs each from 51 different websites, 33 of which saw significant and sustained declines beginning August 1st, and 18 that saw significant and sustained growth during that period. For the remaining sites analyzed our data is still incomplete, but there were already clear enough patterns at 51 completed that we thought it worth publishing before more businesses waste time and money chasing the red herring of Expertise / Authority / Trust.

- Study Limitations

- Ranking Factors Associated with Traffic Gains or Losses

- Other Influential Factors

- Factors that didn’t seem to make a difference

Study Limitations

Apart from the small sample size, the study has a number of additional limitations, and as such should be looked at more as an aggregation of anecdotes than statistically valid research (by comparison, CanIRank’s ranking models are based on analysis of almost 600,000 websites).

We’re not trying to imply weighting or even utilization of specific signals — our objective is to identify possible areas for further analysis and experimentation so that we can guide our clients (and you!) in how best to take advantage of Google’s new ranking algorithm.

As we’ve stressed before, correlation is a terrible way to gauge the relative impact of specific SEO ranking signals because different URLs rank for different reasons. Each of those 10 blue links on page 1 is playing the game by a different set of rules. Smart SEOs ignore those “Google’s 200 Ranking Factors Finally Revealed!” clickbait posts and instead spend a lot of time on competitive analysis to understand which factors matter for the specific keywords they’re interested in.

As applied to diagnosing and reversing traffic declines, this means that there was no single “smoking gun” that caused all the changes we see here (not even something high-level like E-A-T or content quality). Instead, we took the time to understand what the likely culprit was for most of the declining sites (and there was almost always at least one glaring issue).

For example, SelfHacked.com scored very high on measures of content quality, expertise, and authority, but they have some fundamental technical SEO issues. It’s hard to beat LonelyPlanet for content quality and expertise in the travel industry, but they have some hard to distinguish sponsored content that could be seen as deceptive, as well as thin UGC.

Situations like that don’t lead to strong correlations for any one factor; that’s the nature of a “broad” update.

Some of the factors we looked at were subjectively evaluated by our team of SEO consultants using common guidelines similar to Google’s Search Quality Evaluator Guidelines. Subjective evaluations are a recipe for bias, but given our team has no particular interest in any one factor “winning”, at least it’s plausible that we were all inaccurate in a randomly distributed way.

Lastly but most importantly, since none of these sites are CanIRank clients, we’re estimating the change in organic search traffic using 3rd-party SEMRush data, which unfortunately isn’t entirely accurate, especially for small websites and during times of high volatility. We did source some gainers/losers by reading comment threads where webmasters claimed they were impacted, but apart from that we have no knowledge about the organic traffic performance of these websites beyond the publicly-available SEMRush estimates.

Ranking Factors Associated with Traffic Gains or Losses

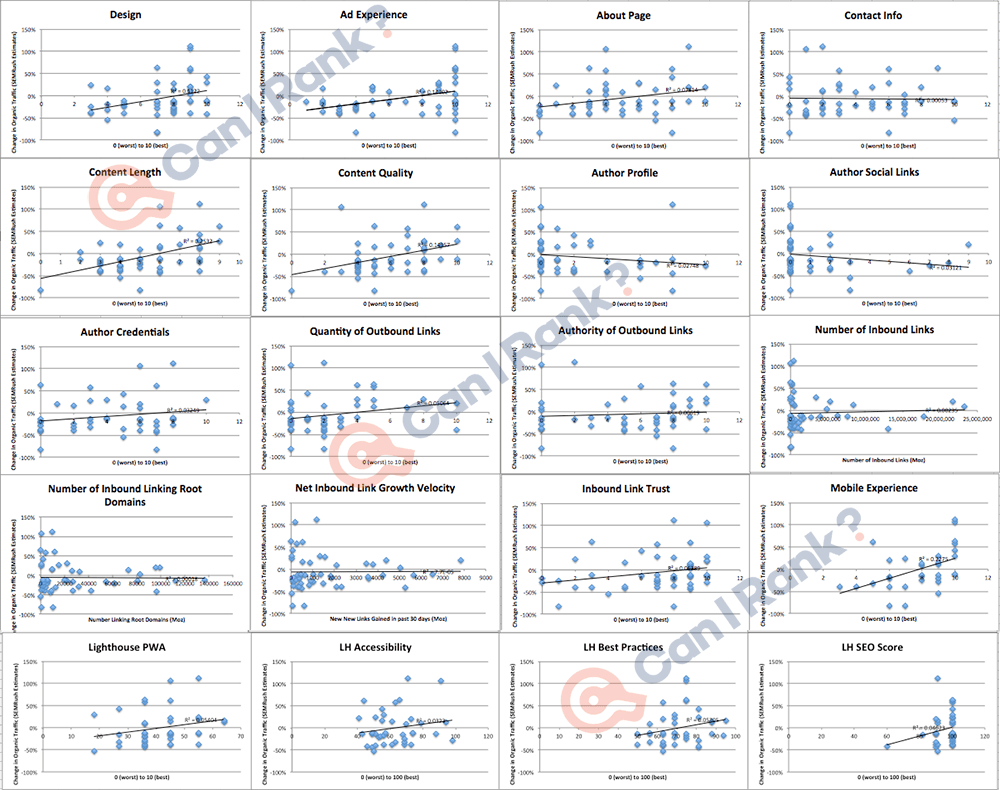

Let’s get right to the good stuff: data! Here’s a scatterplot matrix of 20 numeric factors we evaluated at the website level:

A steep upward sloping line suggests that factor was associated with rankings increases, and tightly clustered dots suggest a good fit. Flat lines suggest that particular signal had no impact on rankings, and downward sloping lines mean sites scoring high on that factor were actually less likely to improve their rankings. For my fellow data nerds who like pretending SEO research is more valid than it really is, I also included correlation coefficients, R², and P-values.
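For readers who want to run the same kind of numbers on their own sites, here is a minimal sketch of how a correlation coefficient, R², and a p-value can be computed for one factor. The data is invented for illustration, and the p-value comes from a simple permutation test rather than whatever method produced the figures in this study, so treat it as an approximation of the idea, not a reproduction of our analysis. For a single factor fitted with a straight line, R² is just the square of the correlation coefficient.

```python
import math
import random


def pearson_r(xs, ys):
    """Pearson correlation coefficient between two equal-length sequences."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    return cov / math.sqrt(var_x * var_y)


def permutation_p_value(xs, ys, trials=5000, seed=0):
    """Two-sided p-value: share of random shuffles whose |r| is at least the observed |r|."""
    rng = random.Random(seed)
    observed = abs(pearson_r(xs, ys))
    shuffled = list(ys)
    hits = 0
    for _ in range(trials):
        rng.shuffle(shuffled)
        if abs(pearson_r(xs, shuffled)) >= observed:
            hits += 1
    return hits / trials


# Hypothetical data: a 1-5 factor score and the % change in organic traffic per site.
factor_scores = [1, 2, 2, 3, 3, 4, 4, 5, 5, 5]
traffic_change = [-40, -25, -30, -5, 10, 0, 15, 20, 35, 30]

r = pearson_r(factor_scores, traffic_change)
p = permutation_p_value(factor_scores, traffic_change)
print(f"r = {r:.2f}, R^2 = {r * r:.2f}, p = {p:.4f}")
```

With only a few dozen sites, a single outlier can swing these values a lot, which is one more reason to read the numbers below as hints rather than proof.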

Let’s examine some of the most influential factors in more detail.

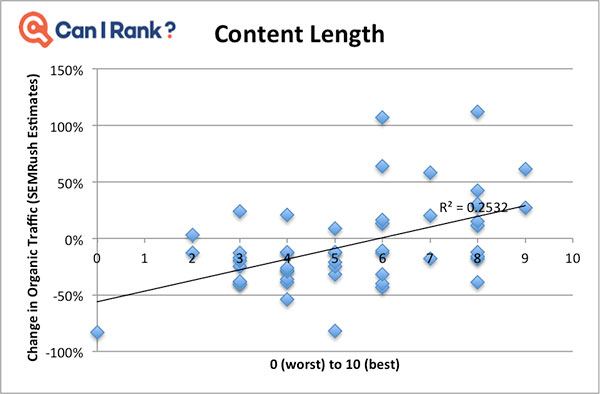

Content Length

- Correlation w/ change in Organic Traffic: 0.50

- P-Value: 0.00017

- Example Sites Impacted: SelfHacked.com, Khan Academy, Deezer.com

Note that we looked at content length relative to other ranking content in that niche, not absolute content length. I think that’s an important distinction, because as Google themselves have pointed out, you don’t necessarily want a 5,000 word answer to “what time is the Super Bowl?”
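To make "relative" concrete, here is one way it could be scored. This is a sketch under our own assumptions: the function name, the choice of the median as the niche baseline, and the word counts are all hypothetical, not the exact measure used in the study.

```python
from statistics import median


def relative_content_length(page_words, competitor_words):
    """Ratio of a page's word count to the median word count of competing pages."""
    return page_words / median(competitor_words)


# Hypothetical word counts for pages ranking on the same keyword.
competitors = [1800, 2400, 2100, 3000, 1500]
print(relative_content_length(2625, competitors))  # 1.25, i.e. 25% longer than the niche median
```

A ratio near 1.0 means the page is about as long as what already ranks; the absolute word count never enters the comparison.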

We knew already that there is a weak correlation between search ranking and content length, and I believe that’s stronger here due to the predominance of sites in niches like finance and health where in-depth content is preferred.

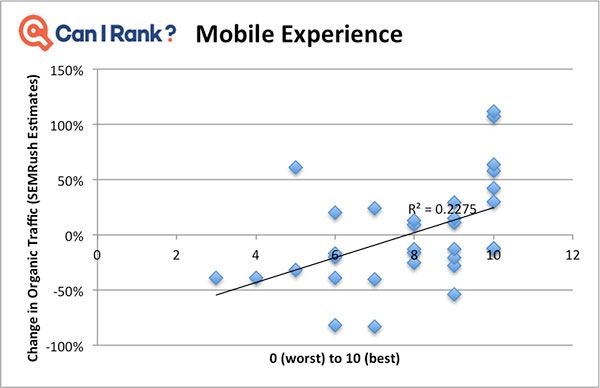

Mobile Experience

- Correlation w/ change in Organic Traffic: 0.48

- P-Value: 0.0058

- Example Sites Impacted: Prevention.com, SelfHacked.com, MSN.com

Nearly all of these sites passed Google’s mobile friendliness test with no errors. Instead, we subjectively evaluated mobile experience by looking at things like usability (font size, contrast), content visibility, and touch target size. Since we’re comparing these factors against changes in organic traffic for Google’s desktop index, I was surprised to see this strong of a correlation. I suspect that’s because sites executing well in this area are generally better at UI and web development, which may be measured by other signals.

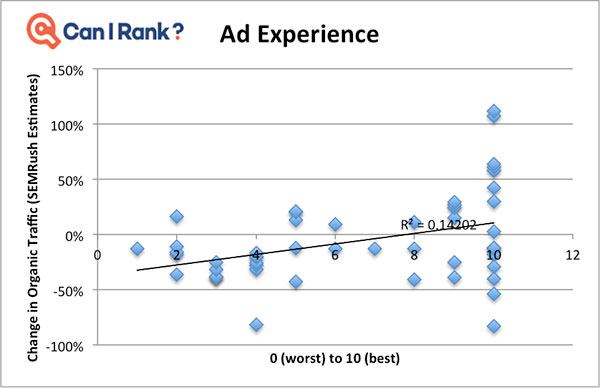

Ad Experience

- Correlation w/ change in Organic Traffic: 0.38

- P-Value: 0.0064

- Example Sites Impacted: ThePaleoDiet.com, DrugAbuse.com, OrganicFacts.net, FleaBites.net, NaturalLivingIdeas.com, VeryWellFamily.com, TheBalanceCareers.com, TheCarConnection.com, Health.com

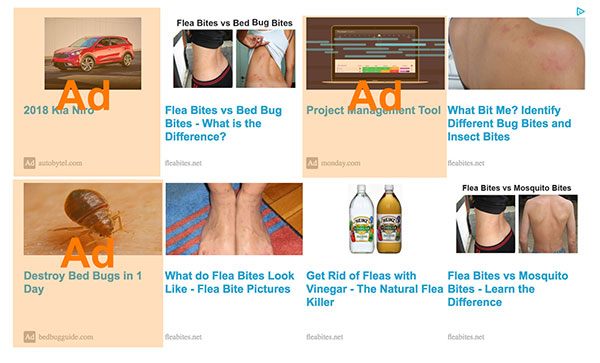

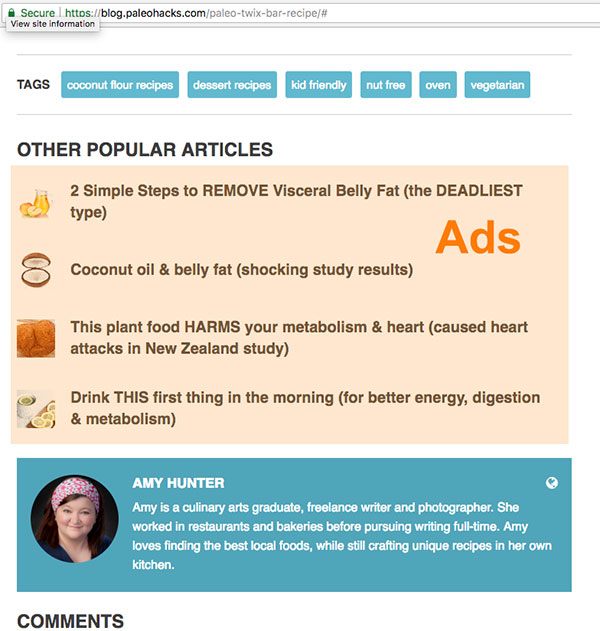

With all of the big names caught up in this update, some of the big traffic declines were really perplexing — until I disabled my ad blocker. Wow, what a scary place the internet has become to the unprotected.

Of course, we’ve heard about Google penalizing excessive ads before, but like we explained in part 1, August’s core algorithm update wasn’t so much about introducing new signals as it was shifting the weightings for certain query intents.

In particular, Google seems to be cracking down on deceptive ads that are too easy to confuse with content. For many of the most dramatic rankings drops, deceptive ads are the likely smoking gun:

ThePaleoDiet.com

FleaBites.net

PaleoHacks.com

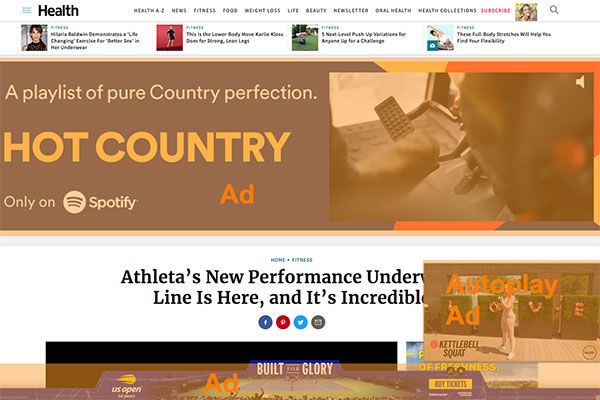

Excessive ads also appear to have been a strong negative factor for some sites. Although we didn’t break out stats by ad type, some of the biggest sins appear to be obscuring content with persistent overlay ads, pushing content below the fold, and autoplay video ads (thank you for that one Google!!!).

Here are a couple examples of excessive ads on authoritative sites that saw rankings declines:

PharmacistAnswers

Health.com

MensHealth.com

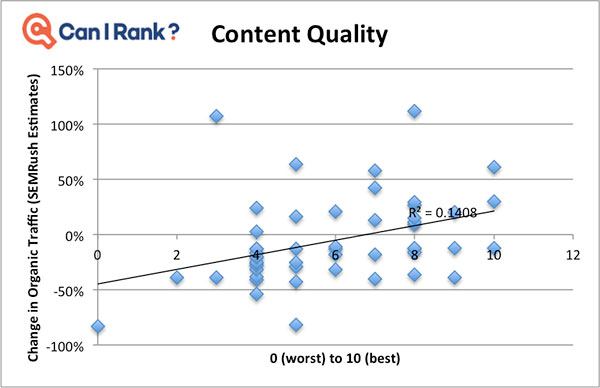

Content Quality

- Correlation w/ change in Organic Traffic: 0.38

- P-Value: 0.0067

- Example Sites Impacted: KetoDash.com, OrganicFacts.net, FleaBites.net, BonsaiMoney

Content Quality is amongst the most subjective factors we looked at, and we mainly included it to appease all the vainglorious folks who emerge from obscurity at times like this to say helpful things like “just write great content and you’ll never have to worry about a Google update again”.

Several sites with high-quality content saw significant drops, including SelfHacked.com, MyProtein.com, TheBalanceCareers.com, TheCarConnection.com, LonelyPlanet.com, and Crutchfield.com.

In any case “Content Quality” isn’t a single signal but some combination of lower-level factors like relevancy, writing quality, satisfying the query intent, and presenting the content well. Although we didn’t collect data on all of these individual factors, we did notice that content on gainers tended to have a few things in common:

- Plentiful images, videos, and/or illustrations

- Well-structured content, such as tables of contents, headers, bullet points, pull quotes, and metadata. Examples:

- Lots of dofollow outbound links to quality, relevant sources. The number of external links had a 0.23 correlation, almost as high as the relationship with Inbound Link Trust. And as any link builder will tell you, it’s a heck of a lot easier to add a high authority outbound link than a high authority inbound link.
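If you want to check the outbound link count on your own pages, a rough sketch using only Python's standard library looks like this. The class name, the sample HTML, and the hosts are made up for illustration, and it only looks at `rel="nofollow"` on the link itself, so it ignores page-level robots directives.

```python
from html.parser import HTMLParser
from urllib.parse import urlparse


class OutboundLinkCounter(HTMLParser):
    """Counts links pointing to other hosts, split into followed and nofollow."""

    def __init__(self, own_host):
        super().__init__()
        self.own_host = own_host
        self.followed = 0
        self.nofollow = 0

    def handle_starttag(self, tag, attrs):
        if tag != "a":
            return
        attr_map = dict(attrs)
        host = urlparse(attr_map.get("href") or "").netloc
        if not host or host == self.own_host:
            return  # relative or internal link, not outbound
        rel_tokens = (attr_map.get("rel") or "").lower().split()
        if "nofollow" in rel_tokens:
            self.nofollow += 1
        else:
            self.followed += 1


# Hypothetical page fragment with one followed, one internal, and one nofollow link.
sample_html = """
<p>See <a href="https://journal.example.org/study">the study</a>,
<a href="/about">about us</a>, and
<a rel="nofollow sponsored" href="https://shop.example.net/deal">this offer</a>.</p>
"""

counter = OutboundLinkCounter(own_host="www.example.com")
counter.feed(sample_html)
print(counter.followed, counter.nofollow)  # 1 1
```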

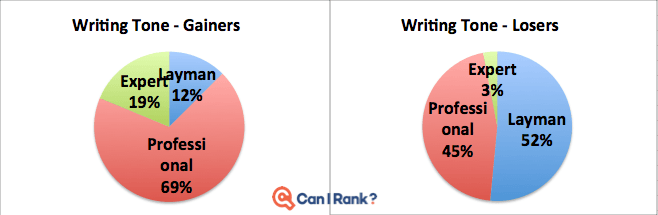

Natural Language Processing has continued to evolve at a rapid pace the past few years, and while I don’t think we can yet algorithmically determine if a piece of content is beautifully written or useful or interesting, it certainly is possible to distinguish the casual “layman” tone of a mommy blogger (for example) from the professional tone of a journalist or the dense jargon-heavy tone of a scientist or other specialist.

I’m not saying Google uses tone as a signal and I definitely don’t think it would be a good way to establish expertise (one might even say that the best experts are those who are able to explain something in an accessible manner). However, it does seem that this update tended to shift things up the seriousness scale a bit:

We also looked at various automated readability scores like Flesch-Kincaid grade level, etc. but didn’t find any relationship.
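For reference, the Flesch-Kincaid grade level is a simple formula over words per sentence and syllables per word. The sketch below uses the standard published coefficients, but the syllable counter is a crude vowel-group heuristic of our own, so its scores will differ somewhat from dedicated readability tools.

```python
import re


def count_syllables(word):
    """Rough heuristic: count runs of vowels, with a minimum of one per word."""
    return max(1, len(re.findall(r"[aeiouy]+", word.lower())))


def flesch_kincaid_grade(text):
    """Flesch-Kincaid grade level: 0.39 * words/sentence + 11.8 * syllables/word - 15.59."""
    sentences = [s for s in re.split(r"[.!?]+", text) if s.strip()]
    words = re.findall(r"[A-Za-z']+", text)
    syllables = sum(count_syllables(w) for w in words)
    return 0.39 * len(words) / len(sentences) + 11.8 * syllables / len(words) - 15.59


# Two invented sentences at opposite ends of the "seriousness scale".
casual = "The cat sat on the mat."
dense = "Pharmacokinetic variability complicates individualized anticoagulation."
print(round(flesch_kincaid_grade(casual), 2), round(flesch_kincaid_grade(dense), 2))
```

Scores like this capture sentence and word length only, which may be part of why they showed no relationship here: tone and vocabulary choice are not the same thing as reading difficulty.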

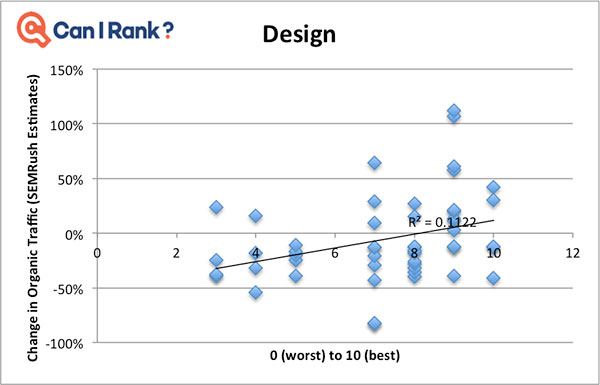

Design

- Correlation w/ change in Organic Traffic: 0.33

- P-Value: 0.0163

- Example Sites Impacted: ESIMoney.com, OrganicFacts.net, FleaBites.net

As with Content Quality, we’re using a subjective human evaluation of design as a catch-all for an entire category of potential signals. It was hard not to notice that some of the gaining sites had incredibly usable, elegant, and modern user interfaces. DietDoctor, Startups.co, Consumerism Commentary, Drugs.com, and LonelyPlanet were some of the highlights for me.

How might we algorithmically distinguish sites like that from something that looks like it was built on Geocities?

It’s certainly plausible that given enough training data, a machine learning algorithm could learn to distinguish attractive, usable designs from cluttered, ugly ones, perhaps by looking at signals like a limited color palette, consistent styling, sufficient white space, appropriate visual hierarchy, etc.

Plausible…but not likely. My hunch is that Google doesn’t need to do this because good-looking sites are already being rewarded by so many other signals that are both more robust and easier to calculate, such as links, engagement, and brand metrics.

It’s also likely that businesses who care enough about their website to invest in a great design also care enough to invest in great content, a good SEO agency, consistent outreach, etc.

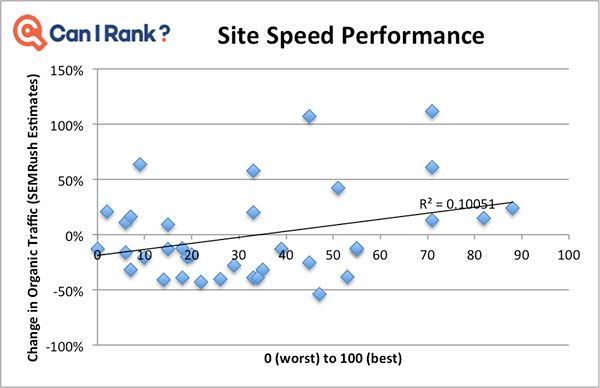

Site Speed

- Correlation w/ change in Organic Traffic: 0.32

- P-Value: 0.060

- Example Sites Impacted: Vaping360, BestProducts.com, TheCarConnection.com

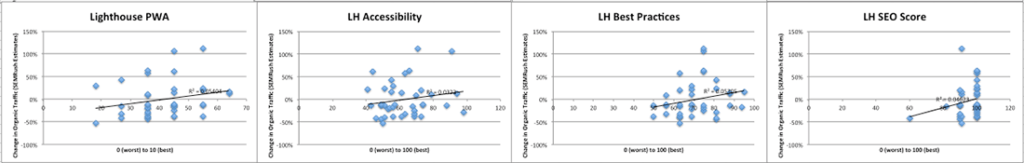

Though not statistically significant, there was a modest correlation between rankings improvements and site speed as measured by Google’s Lighthouse tool. I wouldn’t give too much weight to this as sites that perform well often execute well in other areas too. However, site speed is a worthy goal regardless of whether or not it brings SEO benefits!

For the Google Lighthouse fans out there (you’re among friends!), below are the graphs for the other Lighthouse scores. Although none reached statistical significance, the Progressive Web App and Best Practices scores had the highest correlations:

If your site scores low on automated performance measurement tools and some unscrupulous development agency is trying to convince you that dropping a few hundred Gs on a site overhaul will help you recover your lost rankings, I’d be skeptical. After all, even Google’s own Grow.google, which increased organic traffic 107% in this update, only scored a 45 on their own performance tool.

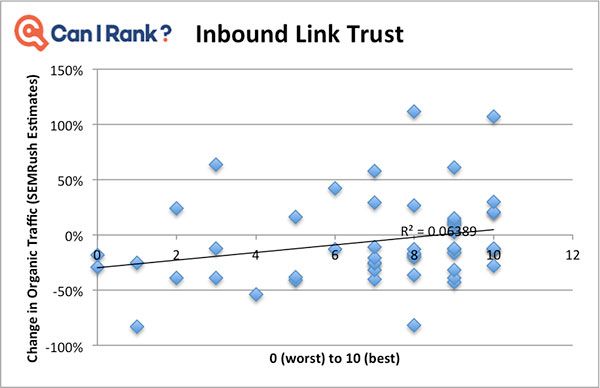

Inbound Link Trust

- Correlation w/ change in Organic Traffic: 0.25

- P-Value: 0.07

- Example Sites Impacted: VaporDNA, BonsaiFinance, AxonOptics, KetoDash

We’re below the level of statistical significance now, but it’s impossible to talk about expertise without mentioning links. Despite their many shortcomings and abuses, links have endured over 20 years as the most reliable signal of a web page’s importance, which is really remarkable.

When one looks at the link graph, there are millions of little clusters around each industry and affinity group. Bouldering websites link to other bouldering sites, cosplay sites link to cosplay sites, and both groups are perfectly happy that they almost never link to each other.

In certain situations those in-network links can be really helpful — who knows the best bouldering sites better than another enthusiast?

But in other situations, close-knit affinity groups can create a sort of “link filter bubble” where a bunch of closely-related sites essentially cite each other as sources.

For example, anti-vaxxers are much more frequently discussing and linking to vaccine-related content than the silent majority who trust vaccines.

To take a less extreme example, many in the paleo community love discussing and sharing the latest nutrition research, generally linking to other paleo blogs (and CrossFit workouts, of course).

Another closely-interconnected community is the green/natural/alternative health space.

In each of these examples, you have a passionate subgroup that is much more vocal online (read: linking more) than a silent majority that reflects mainstream viewpoints.

It seems that in the Medic Update Google decided they couldn’t risk passionate alternative viewpoints overwhelming the search results on sensitive YMYL topics.

Seems that Google declared war on any natural health publishers that offer solutions other than big pharma. Wrecked: https://t.co/CMgK8aebD4, https://t.co/7rynuQvKom, https://t.co/tEZ5VABw7l, https://t.co/lKUSkUwPJR, https://t.co/BSwgHOnW68, https://t.co/ypVi9WupJq pic.twitter.com/38sTEReUHJ

— Tony Spencer (@notsleepy) August 6, 2018

Many of the alternative health sites that declined were leaders in their niche, like DrAxe, SelfHacked, Mercola, or WellnessMama. However, much of their authority came from other similar sites, and rarely did they manage to break into earning links from sites with extremely high TrustRank.

Sites that gained in the latest Google algorithm update, like Examine.com, UpToDate.com, HealthLine, MedLinePlus.gov, and Drugs.com all managed to earn a significant number of links from mainstream, high TrustRank websites.

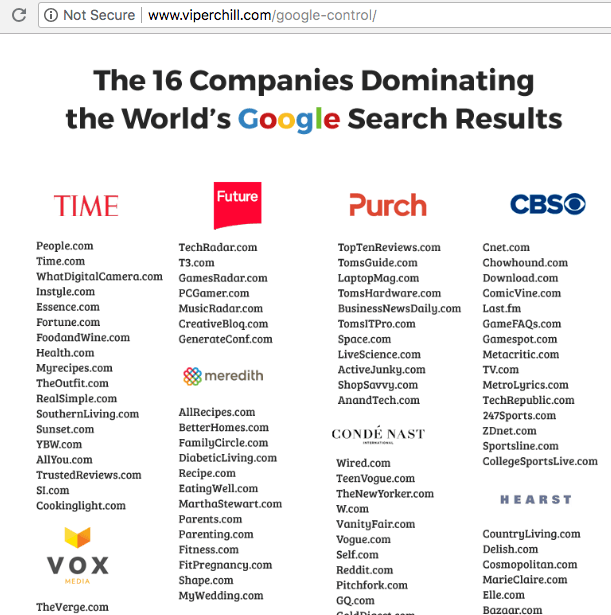

However, there’s also another possible explanation: the Medic Update isn’t targeting alternative viewpoints at all; it’s targeting networks.

Google does sometimes react to bad press, and Glen Allsopp AKA ViperChill did an excellent exposé showing how media conglomerates interlink their huge networks of sites to boost authority and dominate search rankings:

It’s hard to imagine Google wasn’t already aware of this problem, but having a prominent SEO point out that they’re being pwned by 131-year-old media conglomerates probably poured salt in the wound, especially for a bunch of fixie-riding, vinyl-listening, SF hipsters like Google employees (just kidding, actually I don’t think I’ve met a single Hipster Googler).

Coincidental or not, a lot of sites that declined in the August Core Algorithm update were part of a network. To name a few:

- BestProducts.com: -21%

- Prevention.com: -57%

- Health.com: -13%

- LouderSound.com: -36%

- VeryWellFamily.com: -26%

- MensHealth.com: -20%

- WomensHealth.com: -17%

- TheBalanceCareers.com: -21%

- TheCarConnection.com: -16%

- TrustedReviews.com: -19%

- ExtremeTerrain.com: -12%

- ActiveJunky.com: -18%

- TomsITPro.com: -29%

- EatThis.com: -34%

- VeryWellFit.com: -29%

Of course, most big online publishers are part of a network, so this is far from conclusive.

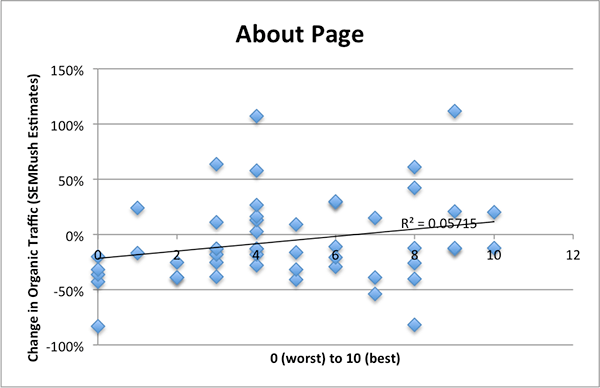

About Page

- Correlation w/ change in Organic Traffic: 0.24

- P-Value: 0.09

- Example Sites Impacted: DrugAbuse.com, LouderSound, NaturalLivingIdeas, MensHealth

Beefing up your About page to emphasize credentials, authority, and expertise is one of the actions frequently recommended to fix Medic traffic declines by SEOs focused on the EAT aspects of the update. Although not statistically significant, About page quality did have a modest positive correlation with ranking improvement.

We subjectively evaluated the quality of About pages specifically with an eye towards how well they communicated expertise and credibility. While improving your About page is an excellent idea for many reasons, the difficulty in objectively scoring About pages underscored my skepticism over its validity as a machine-readable signal.

Let’s look at a few specific examples: Men’s Health doesn’t have an About page at all (and would an About page increase credibility of a household name?). LouderSound doesn’t have an About page, but links to their parent company. Khan Academy and REI have weak About pages, but an entire section of “about” content.

At a content level, machines can’t “read” expertise in the way they can keyword relevancy. Even if you built an “expertise-scorer” that looked for things like professional and educational experience, awards, press, etc. — it would be easily fooled. And many of the most credible experts in every industry wouldn’t bother putting that kind of stuff in their About pages, either because they feel their reputation precedes them, or simply because they don’t like self-promotion.

Bottom line: yes, Google cares about your expertise and authority. But they’re looking at off-site signals to determine that, like links, brand mentions, branded search volume, and possibly social followers. You can’t just declare yourself an expert on your About page and expect Google (or anyone else) to believe it.

Other Influential Factors

Query Intent Fit

- Example Sites Impacted: REI.com, Crutchfield.com, BodyBuilding.com, DrugAbuse.com, DrAxe.com, MensHealth.com

I hesitated to even include this as a factor because it’s more of a unifying hypothesis than a signal. We are working on collecting more data to determine what it means to have a good Query Intent Fit, and what can be done on a practical level to reinforce fit.

If early anecdotes are indicative, I would not be surprised if these are the types of questions that every good SEO will soon be grappling with on a daily basis.

So what is Query Intent Fit, and why do we feel it has become increasingly important following this update?

First, many of the health site fluctuations can be explained through this lens. If I search a serious health query like “symptoms of colon cancer”, it’s extremely important that I receive scientifically accurate, comprehensive, and unbiased information.

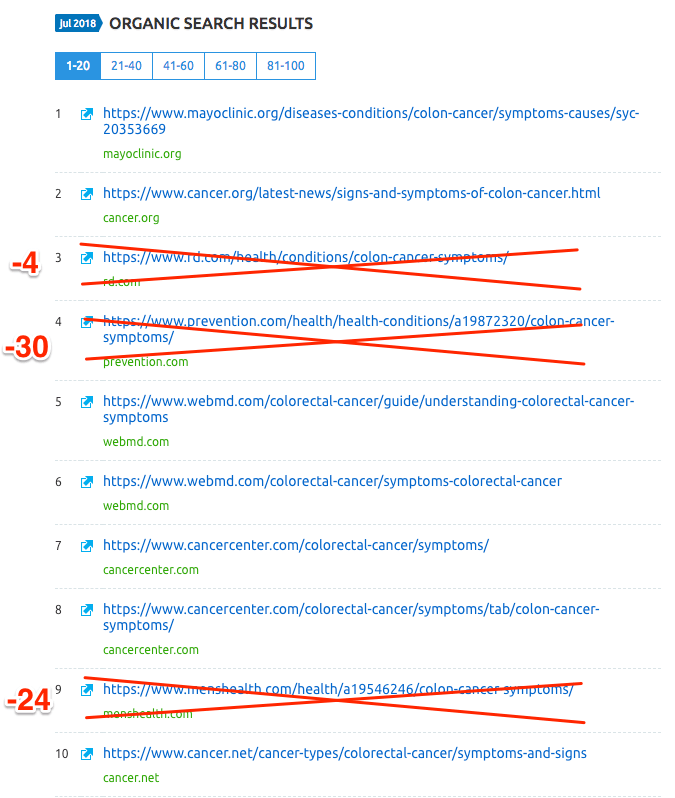

Sure enough, the declining sites for queries like this tended to be more lifestyle or alternative health oriented, and less scientific:

The query intent hypothesis also helps explain why high-quality ecommerce sites like REI, Crutchfield, or BodyBuilding.com declined despite not having any glaring issues.

For some time, many marketers have considered it a best practice for ecommerce sites to add high-quality informational content to their websites. Sophisticated online consumers (particularly millennials and younger) want to know the story and “deeper purpose” behind that purple romper dress they’re buying. Where was it made, how does the company treat their employees, what is the company’s philosophy on fashion or environmental issues, can I wear this to Coachella?

Sites like RedBull, Patagonia, Huckberry, Madewell, FreePeople, REI, HomeDepot, or BetaBrand connect with their customers so effectively they start to blur the line between store and media destination.

Although it wasn’t rewarded in this update, I think you can make a strong case that if I’m trying to choose the right climbing rope or set up a home theater, the content on REI and Crutchfield will satisfy my query intent better than a catalog page. An educated consumer makes a better purchase decision. And the fact that these sites convey expertise gives shoppers greater confidence in their product quality.

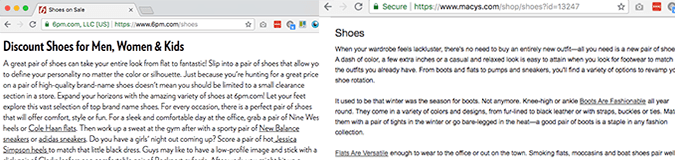

But in other instances the value from mingling commercial and informational content is less clear.

If I search “shoes” I probably don’t need a 500-word explanation of shoes like I get from Macys or 6PM.com — I just want the online or local shoe stores with the best selection, pricing, customer-friendly policies, and shopping experience.

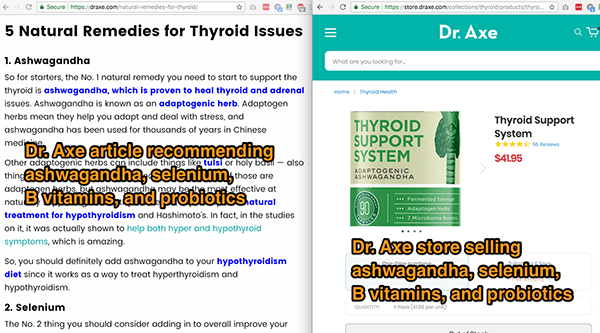

If a store sells herbal teas, they may not be an impartial source for an article on “How herbal teas can cure your migraines”. Should Google trust a site like Dr. Axe, Mercola, or ThePaleoDiet.com less because they have a store selling solutions to some of the health issues they discuss?

What about a natural treatments blog that monetizes via affiliate links to purchase the natural cures they discuss? Or a medical site selling ads to pharmaceutical companies? There’s clearly a spectrum here.

Regardless, once it’s time to make a purchase, for most people the educational benefits of content take a back seat to considerations like price, customer-friendly policies like free shipping and easy returns, reputation, and ease of purchase.

“Thanks for the info Crutchfield, but I’m still going to buy this receiver from Amazon so I save $7 and get it in 2 days.”

That would explain why sites like Costco, Kohls, Amazon, Ebay, and B&H Photo all appear to have gained in this update. Nobody gets excited about linking, sharing, or even reading what Costco has to say about that 5 gallon tub of cookie dough. But when it comes to the American Dream of buying a ton of crap we don’t need at fantastic prices, these sites make for satisfied searchers.

It also explains why ecommerce sites with best in class content are no longer being rewarded for that, as Brian Chappell noted in the medical device space:

Hi @dannysullivan , per your request to post this publicly I have put together 1) my personal content creation process 2) insights into the vertical that I saw damaged by this update. Any feedback is welcome: https://t.co/V4y7iAxuxk

— Brian Chappell (@brianchappell) August 10, 2018

These are not situations traditional information retrieval tools are designed to address. Perhaps the challenge of executing well explains why it’s also easy to find examples that contradict the query intent hypothesis.

For example, while REI and Crutchfield with their extremely useful content both lost ground in this update, Macys and 6PM.com are both still ranking well with their pointless 500-word definitions of shoes.

Many sites that integrate a store with content and also (arguably) lack expertise don’t seem to have been impacted by this update either, including Goop.com, FoodBabe.com, MuscleAndBrawn.com, and UrbanDictionary.com. Other major ecommerce retailers with high quality integrated content (including HomeDepot.com and Petco.com) didn’t see the declines that REI and Crutchfield did. Two of the biggest gainers in the health niche, Examine.com and DietDoctor.com, are also ultimately selling a product (how else could they forego advertising and produce such high quality content?), albeit in a much more subtle way.

Clearly there’s more going on here than can be explained by a simplistic hypothesis. For more background on query intent, see part 1 in our algorithm update series. Further research will revolve around how content and ecommerce can safely be integrated, and which signals appear to support shopper satisfaction.

Technical SEO

- Example Sites Impacted: MyProtein.com, LonelyPlanet, SelfHacked.com

With all of the early talk about Expertise / Authority / Trust, many SEOs forgot about their fundamentals.

If we think about a Broad Core Algorithm update as primarily being about adjusting weightings for different query intents, it makes sense that technical SEO errors would play a significant role in the drops.

For example, site-wide Quality scores like Panda have always been impacted by technical SEO issues like thin content and duplicate content. If Google decides that a YMYL result, for example, must be on a high quality site, websites with mild technical SEO issues that previously went under the radar would see big drops.

And that’s exactly what happened.

The most clear-cut example is probably SelfHacked.com, which according to SEMRush dropped almost 40%. Given that SelfHacked’s articles are thousands of words long, meticulously annotated, chock-full of helpful information, and written mostly by PhDs and others with a high degree of expertise, I imagine they’re getting pretty tired of all the SEO gurus telling them this update is about E-A-T.

But if we assume that health-related sites now have a higher bar for Website Quality, the riddle becomes more clear. Despite nailing all of the “hard stuff” like content quality and great links, SelfHacked made a basic technical SEO error and didn’t configure their WordPress properly to prevent indexing of images on individual pages. As a result, they have hundreds of extremely thin image pages in Google’s index:

https://www.selfhacked.com/contact/dana/

https://www.selfhacked.com/t1/

https://www.selfhacked.com/p5p/

https://www.selfhacked.com/picture1/

https://www.selfhacked.com/header-logo/

https://www.selfhacked.com/about/profilepictures_0003_evguenia/

https://www.selfhacked.com/stat-gray/

If the folks at SelfHacked read this, fix those (and a couple other issues), and I think it’s highly likely your rankings will recover.
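If you suspect your own site has the same problem, one way to surface candidates is to count the visible words on each crawled page and flag anything below a cutoff. The sketch below is only an illustration: the class and function names, the 50-word threshold, and the example.com URLs are our own assumptions, and it expects you to have already fetched the HTML for each URL.

```python
from html.parser import HTMLParser


class VisibleTextExtractor(HTMLParser):
    """Counts words in page text, ignoring anything inside <script> or <style>."""

    def __init__(self):
        super().__init__()
        self.words = 0
        self._skip_depth = 0

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._skip_depth += 1

    def handle_endtag(self, tag):
        if tag in ("script", "style") and self._skip_depth:
            self._skip_depth -= 1

    def handle_data(self, data):
        if not self._skip_depth:
            self.words += len(data.split())


def find_thin_pages(pages, min_words=50):
    """Return the URLs whose visible word count falls below min_words."""
    thin = []
    for url, html in pages.items():
        extractor = VisibleTextExtractor()
        extractor.feed(html)
        if extractor.words < min_words:
            thin.append(url)
    return thin


# Hypothetical crawl results: one real article and one image attachment page.
pages = {
    "https://example.com/long-article/": "<h1>Guide</h1><p>" + "word " * 60 + "</p>",
    "https://example.com/header-logo/": "<h1>header-logo</h1><img src='logo.png'>"
    "<script>var tracking = 'lots of words that should not count';</script>",
}
print(find_thin_pages(pages))
```

Pages that turn up in a list like this are candidates for a noindex tag or a redirect to the parent post, not an automatic verdict; a short contact page, for example, is perfectly fine.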

Technical SEO also appeared to play a role in the drops of other high quality sites like Khan Academy (-13%), Lonely Planet (-12%), and My Protein (-40%).

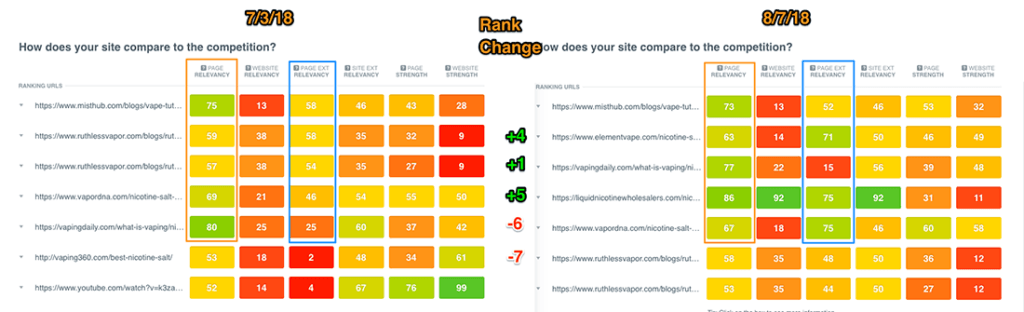

Semantic Optimization

- Example Sites Impacted: Most gaining sites did a good job with this on keywords where they gained

Although we didn’t collect optimization data because we weren’t analyzing sites at the keyword level, in our work for clients we have noticed a lot of examples where semantic “meaning-based” optimization techniques saw significant gains.

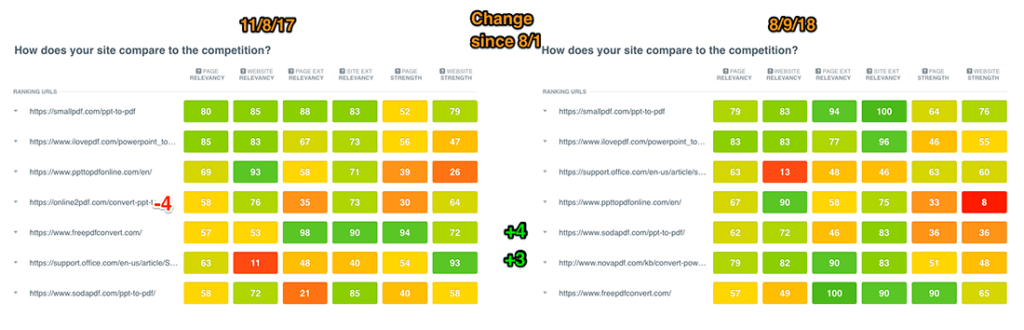

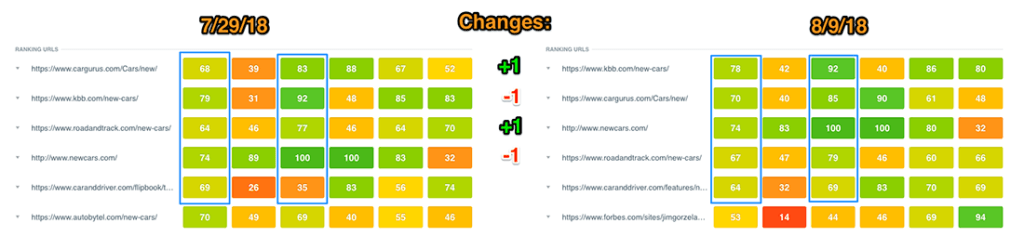

After the big Medic Update we’re seeing a lot more pages with high CanIRank Page Relevancy scores amongst the top results. Check out the change in the first and third column in these before-and-after competitive analyses:

“powerpoint to pdf”

In all 3 cases, pages with higher Page Relevancy scores leapfrogged pages with lower Page Relevancy scores.

Unlike other SEO software that scores on-page SEO based on keyword usage, CanIRank’s Page Relevancy scores are a semantic “meaning-based” measure of optimization. Google has been moving more and more towards semantic relevancy since the introduction of Hummingbird 5 years ago, the trend has intensified with the rise of voice search, and with the August update they appear to have taken yet another big step in that direction.

We’ll dive more into semantic optimization best practices in our guide to Medic recovery, but in the meantime know that these scores are based on the usage of Related Terms and entities that help search engines understand what the page is about.

Related Terms are sometimes mistakenly referred to as “LSI keywords” by SEOs who want to sound smart, in reference to a specific algorithm (Latent Semantic Indexing) that is almost 40 years old and has likely never been used by Google or any other web-scale search engine.

The Revenge of Anchor Text

- Example Sites Impacted: Ask your friendly neighborhood Black Hat

One group that doesn’t appear to have suffered in the August Core Update is Black Hat SEOs. We’ve seen some significant (and unfortunate) gains in low quality sites that aggressively use keyword anchor text (see the third column in the competitive analysis tables above).

In the majority of cases, the keyword anchor text came from PBNs or obviously paid links.

This seems like a reversal of the trend over the past few years, where keyword anchor text was becoming less important (and even harmful if overdone, thanks to Google Penguin).

I would show you examples, but you’d probably throw your laptop out the window in frustration over the inequity and hypocrisy of Google making things so much harder for businesses who follow their rules while rewarding those who don’t.

Factors that didn’t seem to make a difference

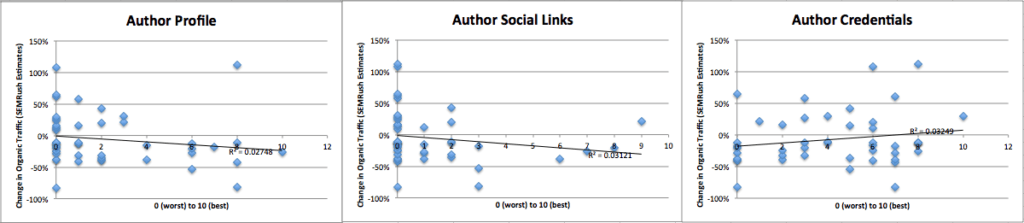

Author Profiles or Credentials

- Correlation: -0.17 (Profiles), -0.18 (Social), 0.18 (Credentials)

- P-Value: 0.25 (Profiles), 0.22 (Social), 0.23 (Credentials)

Some of the early articles focused on E-A-T recommended things like hiring the very best experts you can afford to write your content, and then creating robust author profiles detailing their credentials, expertise, and social media profiles.

So for everyone wondering how they were going to afford paying an MD $700 / hr to write paleo brownie recipes, I have good news: both robust author profiles and links to author social media profiles were actually negatively correlated with rankings improvements in the August update.

Author credentials had a small positive correlation.

However, none of these correlations were statistically significant, and a good SEO should be able to help you identify more robust expertise signals than author profile pages, credentials, or social media profiles.
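To make "not statistically significant" a bit more concrete, here is a minimal sketch of how a correlation coefficient and a sample size translate into a p-value. It uses the Fisher z-transformation, a standard approximation that is not necessarily the test behind the figures above. The sample size of 48 is purely an assumption for illustration, since the number of sites analyzed is not stated in this section.

```python
import math
from statistics import NormalDist


def approx_p_value(r, n):
    """Approximate two-sided p-value for a Pearson correlation.

    Uses the Fisher z-transformation: atanh(r) * sqrt(n - 3) is roughly
    standard normal when the true correlation is zero.
    """
    if n <= 3:
        raise ValueError("need more than 3 samples")
    if not -1.0 < r < 1.0:
        raise ValueError("r must be strictly between -1 and 1")
    z = math.atanh(r) * math.sqrt(n - 3)
    return 2.0 * (1.0 - NormalDist().cdf(abs(z)))


# ASSUMPTION: hypothetical sample size, not taken from the study.
HYPOTHETICAL_N = 48

for label, r in [("Profiles", -0.17), ("Social", -0.18), ("Credentials", 0.18)]:
    p = approx_p_value(r, HYPOTHETICAL_N)
    verdict = "significant" if p < 0.05 else "not significant"
    print(f"{label}: r={r:+.2f}, approx p={p:.2f} ({verdict} at the 0.05 level)")
```

The takeaway is that weak correlations like these need a much larger sample before they can be distinguished from noise, which is why none of the author-related factors clears the usual 0.05 threshold.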

If you’re still convinced that expertise and author credentials are a factor, consider that a large number of sites with questionable science or outright fake news did NOT see a meaningful decline in this update: Goop.com (+10%), DoctorOz.com (+15%), NaturalNews.com (+7%), FoodBabe.com (-3%), Breitbart.com (+352%), YourNewsWire.com (+35%), Vaccines.new (+3%), and Vaxxter.com (+10%).

If this is an attempt to reward author expertise, Google missed the mark.

Remember, Google’s Quality Rater Guidelines measure outputs, not inputs. In other words, the Quality Rater Guidelines tell us the types of sites Google wants their signals to boost, not the signals themselves.

Of course, that doesn’t mean that adding robust author profiles and hiring authors with a high degree of expertise in your field is a bad idea — quite to the contrary, in many cases. But if you’re looking for a more direct path to recovering your lost rankings, you’ll have better luck if you consider the inputs search engines are looking for as well as the outputs.

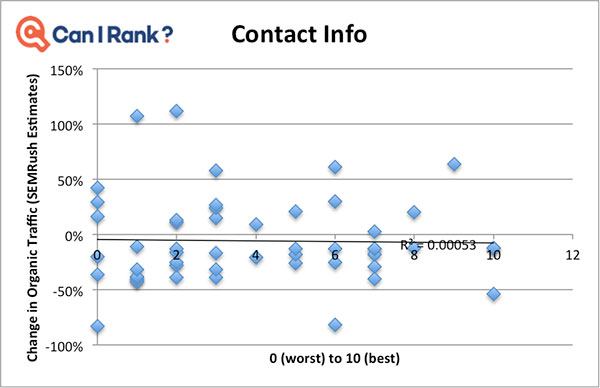

Contact Information

- Correlation w/ change in Organic Traffic: -0.02

- P-Value: 0.87

Another recommendation from the E-A-T crowd was to add contact information on every page, or at the very least have a robust Contact Us page with phone, email, physical address, etc.

This, too, is a fine idea but not something that seems to have impacted rankings during the latest update.

Google does have an identity layer, but as local SEOs will tell you, it’s determined by a lot more than your Contact Us page. It may be that the identity layer played a role in this update for certain sites. For example, we noted a handful of non-US based ecommerce sites losing rankings in US Google.com. This fits with the “Query Intent” hypothesis: location doesn’t matter much if I’m researching measles, but it does if I want to buy a new mountain bike.

Next time someone asks you, “What is the Google Medic update?” we hope you’ll have enough data to answer accurately.

Up next: how to recover from the Medic Update?

In the third and final post in our series on Google’s August Core Algorithm Update AKA the Medic Update AKA the Query Intent Update, we will get into specific action steps you can take to identify which of the above signals may be responsible for your site’s decline, as well as what you can do to reverse the damage and recover your lost rankings. Diagnose, fix, and repair any damage done by the Google Medic update.

This (and your part 1) were incredible.

Aside from the post on rankranger about different query intent/conflicting purpose of website (shops pushing their own products via informational content), this is the only post that’s actually dug in to data and offered some genuine analysis.

So many blogs are now just echoing Marie’s initial EAT thoughts from earlier in the month. It’s refreshing to read something different that looks at sites from each angle.

Looking forward to Part 3.

Thanks Mike! I also enjoyed the RankRanger post, as well as Glenn Gabe’s analysis: https://www.gsqi.com/marketing-blog/august-1-google-algorithm-update-analysis-and-findings/ Remarkably, despite this being one of the biggest updates in a while, it seems that almost everyone else just accepted the EAT hypothesis without doing any analysis of their own.

Interesting info. I have seen too much B.S. already about author profiles and the like. I mean, how do you think Google can understand a damn “Author” expertise?

My best bet is that for most of YMYL, Google’s quality raters simply submitted a list of poor quality sites in their opinion and Google used that to build a model which then downranked sites automatically for keywords they consider YMYL. Leaving the low authority guys that weren’t seen, to dance to the top.

Agreed author identity is much noisier than website identity.

Thanks, I’m rarely impressed, but that’s the best analysis of this update I have read. I have read many! Data, even if it’s not statistically valid research, is much better than the wild speculations published elsewhere or chatter on forums.

Thank you very much, Florian! Agreed, some data is infinitely better than no data.

Excellent article Matt. Really insightful and pretty much in line with my own findings. No mention of domain age that I can see, however, and it seems to me that a lot of older sites (even with poor content) have made gains that are out of the ordinary. Not across the board of course, after all this is SEO, but a factor worth looking at. In my research, many sites <2 years old had taken hits against more established competitors, regardless of their backlink profile (yes, I analysed the subject sites which were the top 3 movers *up and down* in 3 different niches).

Andy

Thanks for adding that Andy! We haven’t looked into domain age at all, but I imagine some of the newer sites that went from 0-60 thanks to being part of a media conglomerate (BestProducts.com, TheBalanceCareers, VeryWellFamily, etc…) would register as a bit of an anomaly in that regard.

Truly a terrific post. I’ve really enjoyed reading it and diving into some of the sites which didn’t come up during my own brief analysis.

The one area I’m not totally convinced by – but really eager to be convinced on – is the analysis of selfhacked.com.

The website isn’t run by anyone with any expertise in the field beyond their own hobbyist enthusiasm (self-described as “a journey of self-experimentation”). One of the articles written by Joe, Health Benefits of Semen (https://www.selfhacked.com/blog/unhealthy-ejaculate/), is certainly in-depth, but isn’t backed up by any authority: Joe doesn’t display any on his own author page or the About page of the site, nor among his qualifications in his LinkedIn profile. The article isn’t peer-reviewed by anyone either.

To make matters worse, almost all of the ads on the right side are external owned properties, effectively affiliate schemes.

Which begs the question, would Google trust this information enough to show it to users? Would they deem it or the author credible enough to link users to it? I’m not convinced.

There’s certainly more in the Medic Update than author authority (I wish I’d explained more in my own post that brand authority, topical authority, and topical linking played a part too), but I think it must certainly play a part.

Thanks for the comments Dale! I read your post as well (here’s the link for anyone else who sees this: https://exposureninja.com/blog/what-is-medic-algorithm-update/) and definitely appreciated how much research and effort you put into creating a data-driven exploration of the topic – one of the few who did that for this update! My skepticism over author reputation as a signal centers on the technical hurdles of reliably evaluating something like that. Since I published this post, Google’s John Mueller confirmed that they are not measuring reputation at the author level: https://twitter.com/glenngabe/status/1032237657957511168

You can’t argue with data! Thanks for the post Matt.

Glad you found it insightful Matthew! Would love to hear any thoughts or additions you might have.

I agree with your analysis: for the most part it was about Query Intent. I am one of those that was hit pretty hard. The thing is, I lost keywords which were ranking on pages that were totally irrelevant for those queries.

Say a page is talking about tennis shoes but then it also ranks for gold shoes and running shoes. I lost most of those queries that were on these pages since they were not satisfying searcher intent. Another thing I noticed in the March update was that some of my pages started ranking for totally irrelevant queries which were bouncing all over different pages for several weeks. I think that was Google testing out how accurate their systems were.

I tend to rank for keywords that are now relevant to the pages they are on, and it seems I will have to write pages on those lost keywords if I ever want to rank for them again.

Thanks for sharing Sammy!

How do I subscribe to get notified of part 3?

The easiest way would be to follow @canirank or myself on Twitter (https://twitter.com/matt_bentley) or LinkedIn (https://www.linkedin.com/in/mattbentley)

This is literally what I’ve been begging for since about August 2 so I couldn’t be happier right now, despite the fact that the bell is most likely tolling for sites like mine (a smaller, more focused version of Dr. Axe and the other big alternative health sites hit hard). What Google has taken away, surely they won’t give back. I’m very anxiously awaiting Part 3 and can’t thank you guys enough. It seems like you’re sitting on a gold-mine of opportunities to help the big players recover.

Glad to hear you found it useful Justin! If it’s any consolation, this update impacted big and small alike.

But why?

I did my homework to find out why, especially since one of my sites lost 10 percent. That was bad news considering the investment. The whole thing was supposedly based on EAT and YMYL, so my research set out to find the formula Google was using. To cut short the whole story of methodology, hypothesis, data analysis, and conclusions: I took 20 sites that registered a gain and 20 that lost, and looked at the presence of EAT and YMYL signals on each. I could not find much difference, and I could not figure out why some sites lost traffic even though they had good EAT and YMYL.

I did more digging and found alarming results. Sites with broad topic coverage, such as prevention.com, lost more traffic. Sites that covered one topic in depth, such as diabetes.org.uk, recorded high gains. Still, some broad sites such as health24.com recorded traffic gains. So, my question is: what is going on?

Conclusion: it is hard to express EAT and YMYL across broadly covered topics. When you are talking about HIV/AIDS, arthritis, diabetes, and weight loss on one domain, you need to create a department of authors for each, as it is not possible to have one doctor for both diabetes and arthritis. That is why health24.com recorded a gain: it is able to show full expertise for each of its departments. For sites focusing on one area, their expertise was focused on that area. All I can say is that the new direction is to build sites focused on one area, because it is easier to show EAT. All the sites that showed signs of gain have one thing in common: they explored knowledge and topic gaps in depth.

Another explanation for the lack of relationship between author EAT and rank change that you observed is that Google is not evaluating author EAT using those signals.

In regards to Inbound Link Trust. Are you saying that sites in general which have low-quality links were hit by this update?

Or are you focusing on the larger publications which were hit due to interlinking websites in their network?

Network patterns for sites large and small

Well, this sucked. It’s now been about 15-20 days since my site got pushed down. Traffic went down 50%, yes 50%. I started working on the site a good 4 months before this update. May update fucked it up heavily but it was back to normal and better within 14 days again.

Now we’re talking 2k+ keyword position drops and that’s just the ones I am tracking.

The site took off like a speedboat from the beginning and had been constantly climbing and getting good links without my help. It’s now been stuck down here but I am only at a 15-20% traffic drop now.

Thing is the keywords which I track aren’t really the ones sending traffic as the site was fairly new. But they are still down 1.5-1.6k.

I am not in the health niche, very different to be honest. Would be fun if you wanted to have a look because it’s crazy over here. I took advantage of the time to silo the site and increase the EAT, just in case.

However, the only other option I can think of right now is that it uses the Amazon API to seamlessly integrate the product ads into each article. Most articles on my site have these and there are occasionally 10-2 affiliate links from one article. Yes, it’s a review/best of product affiliate site.

I just finished writing 5 informational articles in order to lower the affiliate link per article ratio since these will only link internally.

Hoping to see some changes in the coming days as these get crawled a few times.

Brilliant analysis, thank you- very much looking forward to the Recovery Guide.

Your research confirmed many of my suspicions…it’s always nice to have ‘back up’.

In our highly niche medical sector, the big gainers seem to share the following attributes: mainly large global brands (the ones who would be described as the big players if a customer was asked); visually attractive websites with a high graphics-to-text ratio; mainly not too commercial or at least, no overt ads / banners / CTAs.

There were 2 noticeable anomalies: the top ranking is very commercial…but they’re also the biggest brand by far so perhaps it was offset (money talks, huh); 1 very small brand with poor content came out of nowhere to take a top 3 spot for many core keywords – they seem to be following the keyword stuffing practises you mention. Infuriating.

Across all the sites only 1 is frequently citing reputable medical authors, despite this being a heavily regulated industry so I really was highly sceptical about the strength of this EAT element and glad to see other factors recognised in the mix.

That “crappy site that came out of nowhere” pattern is something we’ve observed as well. Mostly due to black hat links with excessive keyword anchor text. I’m hoping/expecting that Google will fix that in future refinements.

Thanks, Matt, for this information. We run a health website with experts reviewing the content. We lost around 60% of our traffic. Everything you have mentioned completely resonates. Eagerly waiting for a guide on how to recover.

You wrote that “Sites that gained in the latest algorithm update, like Examine.com, UpToDate.com, HealthLine, MedLinePlus.gov, and Drugs.com all managed to earn a significant number of links from mainstream, high TrustRank websites.”

But observe that examine.com dropped in September. Also, it had 1.3M PV last year compared to 300K this month, so a huge drop in general. Drugs.com had 53M PV per month last year but only 17M PV per month this year, so a huge drop in total.

The only sites that consistently continue the upward trend are Healthline and Medical News Today (which is owned by Healthline). They are having an unbelievable increase in traffic. It seems like all the lost traffic is mostly going to these two. Even WebMD (which is a trusted site) didn’t increase in such a way. In fact WebMD hardly had any increase.

Also observe that healthline has a lot of ad units in each article.

HealthLine is heavy on ads indeed: 12 AdSense units on a single page, plus the newsletter overlay/popup. I can’t really spot a difference between them and sites that got the short end of the stick, e.g. ThePaleoDiet or MensHealth. Their ads are neither fewer, nor less obtrusive (above content, multiple sidebar, multiple between content, below content), nor less deceptive (all AdSense).

There may be other issues with those sites as well, but if you look closely ThePaleoDiet.com, MensHealth and Health.com all have ads masquerading as content. If you don’t see them as a marketing professional, how likely is it that normal users can distinguish? (note that you may see different ads depending on your location)

How to get rank up after that update? It’s a hard one, looking for article regarding that.

That’s Part 3, coming soon…

Hi Matt,

Thanks for the great article. Now I am waiting for part 3.

This is a really thorough analysis backed with real data. The best and the most sensible on the August algorithm update so far. Eagerly waiting for the grand finale.

This article provides a better perspective which is different from what many SEO experts are trying to sell. Nice article. I am seriously looking forward to the part 3.

Hi Matt, This is the best article I’ve seen about the Google update.

You’ve mentioned that semantic optimization is important however my site lost a LOT of traffic even in well optimized articles.

For example (this is not a real example) let’s say that people look for the benefits of apple juice and lemon.

The intention is to read about the benefits of apple juice mixed with lemon. I have an extensive article about it with dozens of sources pointing to scientific studies, and it was written by a nutrition expert. It was always on the first page (for the past 2 years) but after the August update it is at the end of the second page.

After August, Google put in the first page articles that discuss the benefits of EITHER lemon or apple juice. There are also 3 extremely low quality and short articles that discuss the mixture of both. Some of them are only 2-3 paragraphs long. These are mainly from generic sites (some are “brands”) that don’t specialize in health and they lack substance.

I have many other articles like the one above, all targeting exactly what the searcher was looking for, written by experts and loaded with medical studies. I am at a complete loss as to what went wrong here on my side.

As a side note, the site is very lean with ads, has great design, and is extremely user friendly.

I REALLY look forward to your next part on how we can fix the issues in our sites.

Glad you found the article useful Ralph, and I appreciate you sharing your experience. We’ve looked at a LOT of sites impacted by Medic so far, and the more we learn, the more we feel that Medic was not just about one thing, like intent or expertise or trust. There were many changes, and they impacted different query types and websites in different ways. I guess that’s why they called it a “broad, core algorithm update”? I hope this data helps you track down the specific issue for your site, and yes Part 3 is forthcoming and will focus on just that (we’ve been so flooded with clients looking for help that publishing blog posts has taken a bit of a back seat for the time being).

Hi Matt, To echo what everyone else has said… best article about the medic update so far! I’ve been looking daily for part 3. Thank you for the amazing data and info!

Thank you for the kind words, Kaycie! I’m sorry that Part 3 has been so long coming… We’ve had so many people contacting us to get help identifying their specific issues and putting together a recovery plan that I haven’t had time to write for the blog. I have ideas and ambitions for a fairly meaty and detailed post on Medic Recovery, just need time to put pen to paper.

Hey Matt, any ETA on part three? I think you have a big audience waiting to hear your thoughts on this!

John, we are working on it! It’s been busy at CanIRank but we will definitely let you know once it’s posted!

Great article guys

In general from what I’ve seen the sites that Google marked in August as “bad” keep dropping. The ones that it marked as “good” keep going up.

It has been almost 6 months since the Medic update and I’ll be surprised if there’s any “fix”.

Happy to be proven wrong! But the fact that you still haven’t written the solution part makes me wonder….

In my opinion, the only thing that we can do is wait for another “core” update. The current SERP results seem quite generic where you have the same sites at the top for most of health related queries. I haven’t seen much of recovery stories.

Really interesting article, it helps me in my study about digital marketing and research. Where’s the part 3? :)

Hello, Superb blog!

Part 3 not out yet?

We’re working on it, I promise! :) Thanks for the reminder!

Very nice post!

I am also trying to build a website focused on the medical sector, localized here in South East Asia. The article you have here is very useful and enlightening to web developers, medical online content creators and medical professionals alike.

Great tips in generating traffic and how Google works with the Medic Update.

This would help a lot once I can get my website up and running. A million thanks for this analysis!

You’re welcome Enrique, we’re glad you enjoyed it!

I’d love to see an update since the changes in March. One of the sites that benefited in August (healthline) lost 40M page views after the latest update. Other sites that benefitted in August had a huge drop in March. Google be crazy!

It’s on our list Dave! We will let you know once it’s up and live!

> Factors that didn’t seem to make a difference

> Author Profiles or Credentials

Very nice. Now I can use this data to substantiate arguments with gurus on Twitter.
